FlyEM Hemibrain V1.1 Release

Bill Katz

Senior Engineer @ Janelia Research CampusVersion 1.1 of the Hemibrain dataset has been released. We suggest you familiarize yourself with the dataset and tools to access it through the initial discussion in our previous V1.0 release post. This post provides updated links and descriptions.

neuPrint

The neuPrint Explorer should be most visitors' first stop, and it has been updated with the V1.1 data and sports enhancements since last January.

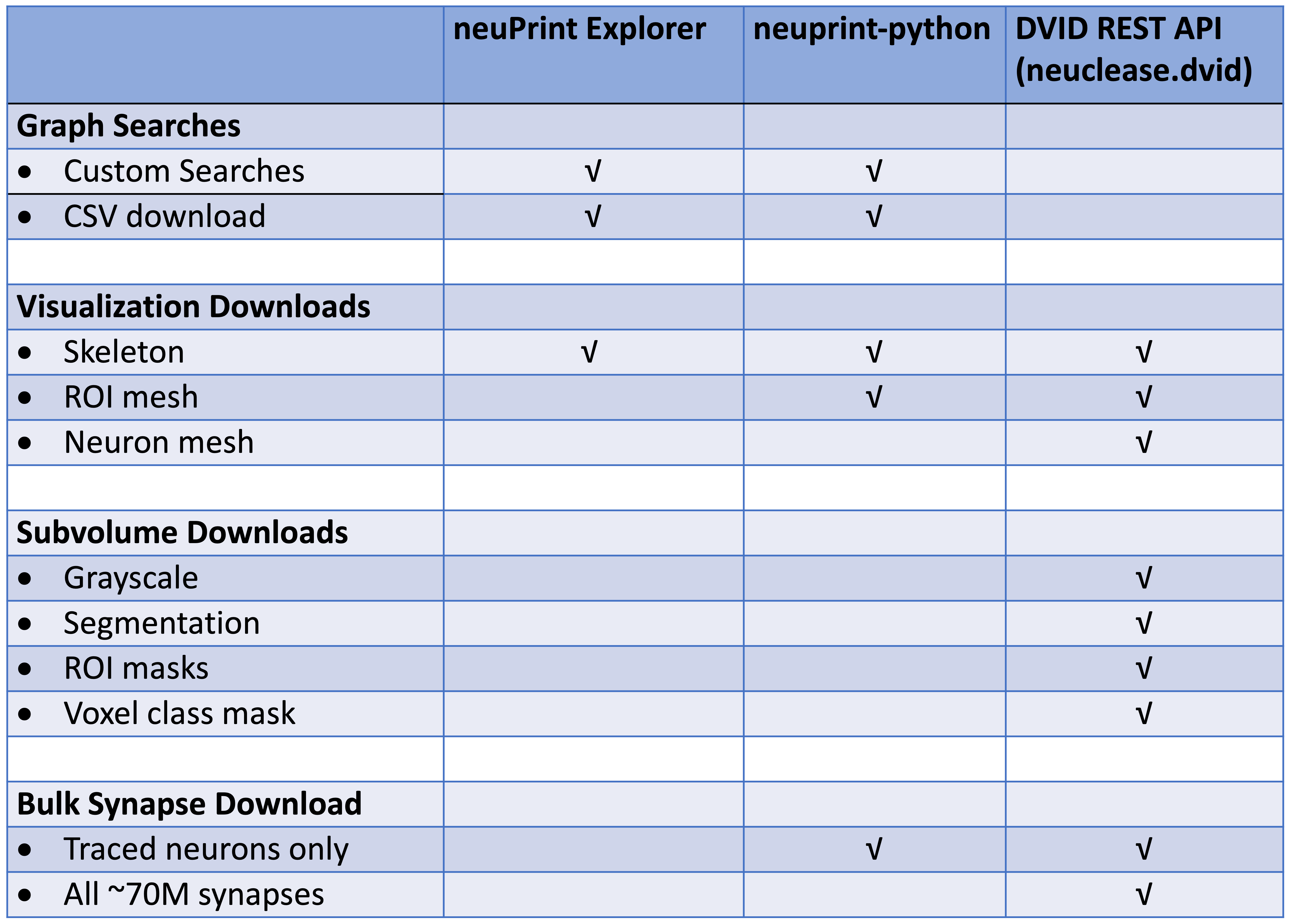

For programmatic access to the neuPrint database, see the neuprint-python library. For power-users who need access to the DVID database (see last section), you can try the neuclease.dvid python bindings.

This table differentiates each library based on the types of requests they support:

Downloads

From the 26+ TB of data, we can generate a compact (45.5 MB) data model containing the following:

- Table of the neuron IDs, types, and instance names.

- Neuron-neuron connection table with synapse count between each pair.

- Same as above but each connection pair is split by ROI in which the synapses reside.

You can download all the data injected into neuPrint (excluding the 3D data and skeletons) in CSV format.

Pending ... The skeletons of the 21,663 traced neurons are available as a tar file. Included is a CSV

file traced-neurons.csv listing the instance and type of each traced body ID.

Neuroglancer Precomputed Data

Here's a link to viewing the v1.1 dataset directly in your browser with Google's Neuroglancer web app.

The hemibrain EM data and proofread reconstruction is available at the

Google Cloud Storage bucket gs://neuroglancer-janelia-flyem-hemibrain

in the Neuroglancer precomputed

format

for interactive visualization with

Neuroglancer and

programmatic access using libraries like

Cloudvolume (see below).

You can also download the data directly using the Google gsutil tool (use the -m option, e.g., gsutil -m cp -r gs://bucket mydir

for bulk transfers).

To parse the data, use one of the software libraries below or you'll have to write software to parse data using the format specification linked above.

Available data:

- EM data

- Original aligned stack (at original 8x8x8nm resolution, as well as downsamplings)

gs://neuroglancer-janelia-flyem-hemibrain/emdata/raw/jpegJPEG format - CLAHE applied over YZ cross sections (at original 8x8x8nm resolution, as well as downsamplings)

gs://neuroglancer-janelia-flyem-hemibrain/emdata/clahe_yz/jpegJPEG format

- Original aligned stack (at original 8x8x8nm resolution, as well as downsamplings)

- Segmentation

- Volumetric segmentation labels (at original 8x8x8nm resolution, as well as downsamplings)

gs://neuroglancer-janelia-flyem-hemibrain/v1.1/segmentationNeuroglancer compressed segmentation format

- Volumetric segmentation labels (at original 8x8x8nm resolution, as well as downsamplings)

- ROIs

- Volumetric ROI labels (at 16x16x16nm resolution, as well as downsamplings)

gs://neuroglancer-janelia-flyem-hemibrain/v1.1/roisNeuroglancer compressed segmentation format

- Volumetric ROI labels (at 16x16x16nm resolution, as well as downsamplings)

- Synapse detections

- Indexed spatially and by pre-synaptic and post-synaptic cell id

gs://neuroglancer-janelia-flyem-hemibrain/v1.1/synapsesNeuroglancer annotation format

- Indexed spatially and by pre-synaptic and post-synaptic cell id

- Tissue type classifications

- Volumetric labels (at original 16x16x16nm resolution, as well as downsamplings)

gs://neuroglancer-janelia-flyem-hemibrain/mask_normalized_round6Neuroglancer compressed segmentation format

- Volumetric labels (at original 16x16x16nm resolution, as well as downsamplings)

- Mitochondria detections

- Volumetric labels (at original 16x16x16nm resolution, as well as downsamplings)

gs://neuroglancer-janelia-flyem-hemibrain/mito_20190717.27250582Neuroglancer compressed segmentation format

- Volumetric labels (at original 16x16x16nm resolution, as well as downsamplings)

Tensorstore

Earlier this year, Google released a new library for efficiently reading and writing large multi-dimensional arrays. At this time, there are C++ and python APIs. An example of reading the hemibrain segmentation is in the Tensorstore documentation.

CloudVolume

The Seung Lab's CloudVolume python client allows you to programmatically access Neuroglancer Precomputed data. CloudVolume currently handles Precomputed images (sharded and unsharded), skeletons (sharded and unsharded) and meshes (unsharded legacy format only). It doesn't handle annotations at the moment, which are usually handled by whatever proofreading system a given lab uses.

Full Datasets with DVID

For those users who want to download and forge ahead on their own copy of our reconstruction data, you can download a replica of our production DVID system and the full Hemibrain databases.

You can quickly download a relatively small DVID executable which then allows access to grayscale data stored in the cloud, both in JPEG and raw format. All other data can be downloaded by type (e.g., synapses, ROIs, segmentation, etc.) so you can choose what you need.

Please refer to the Hemibrain DVID release page for download information. This is currently the v1.0 data but will be updated to the v1.1 data shortly.

Please drop us a note if you are running your own fork so we can keep you apprised of continuing work, documentation, and opportunities to push back your changes to the public server.

NeuTu

NeuTu is a proofreading workhorse for the FlyEM team and can be used to proofread with DVID. It allows users to observe segmentation and to split or merge bodies if necessary. It also permits annotation, ROI creation, and many other features. Please visit this NeuTu documentation page for how to setup DVID with the Hemibrain dataset as described below.